Run models locally

Use case

The popularity of projects like llama.cpp, Ollama, GPT4All, and llamafile underscores the demand to run LLMs locally (on your own device).

This has at least two important benefits:

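- Privacy: Your data is not sent to a third party, and it is not subject to the terms of service of a commercial service
- Cost: There is no inference fee, which is important for token-intensive applications (e.g., long-running simulations, summarization)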

Overview

Running an LLM locally requires a few things:

- Open-source LLM: An open-source LLM that can be freely modified and shared
- Inference: The ability to run this LLM on your device with acceptable latency

Open-source LLMs

Users can now gain access to a rapidly growing set of open-source LLMs.

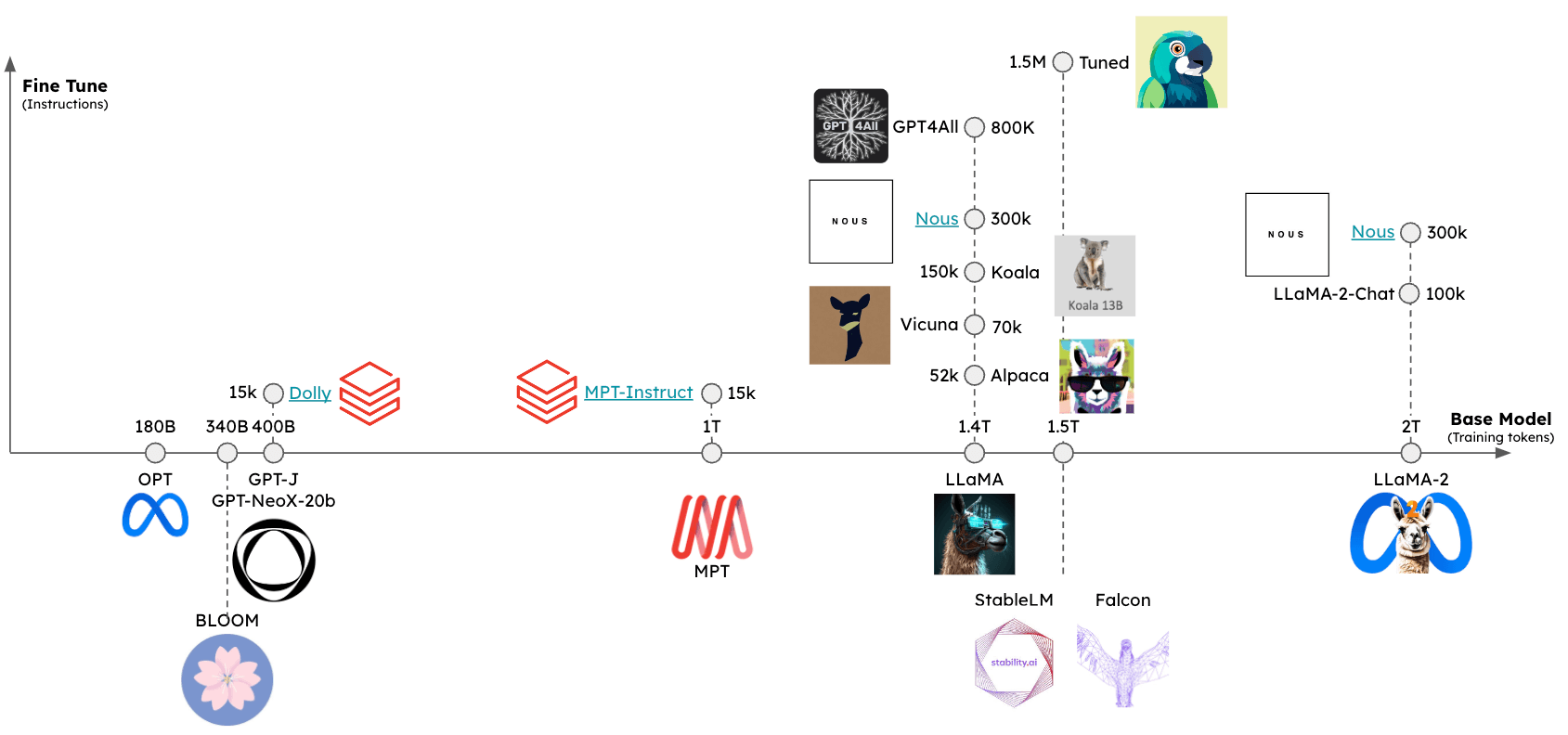

These LLMs can be assessed across at least two dimensions (see figure):

- Base model: What is the base model and how was it trained?
- Fine-tuning approach: Was the base model fine-tuned and, if so, what set of instructions was used?

The relative performance of these models can be assessed using several leaderboards, including:

Inference

A few frameworks have emerged to support inference of open-source LLMs on various devices:

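- llama.cpp: C++ implementation of llama inference code with weight optimization / quantization
- gpt4all: Optimized C backend for inference
- Ollama: Bundles model weights and environment into an app that runs on device and serves the LLM
- llamafile: Bundles model weights and everything needed to run the model in a single file, allowing you to run the LLM locally from this file without any additional installation steps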

In general, these frameworks will do a few things:

- Quantization: Reduce the memory footprint of the raw model weights
- Efficient implementation for inference: Support inference on consumer hardware (e.g., CPU or laptop GPU)

In particular, see this excellent post on the importance of quantization.
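To make the idea concrete, here is a toy sketch of symmetric ("absmax") quantization. Each float is mapped to a signed 4-bit integer that shares a single scale, and is then mapped back. This is an illustrative simplification, not the scheme any particular framework uses. For example, llama.cpp's quantized formats work block-wise, with a scale stored per block of weights. The function names are hypothetical.

```python
# Toy symmetric ("absmax") quantization: store each weight as a small signed
# integer plus one shared float scale. Simplified on purpose; real formats
# (e.g. llama.cpp's q4_0) keep a separate scale per block of weights.
from typing import List, Tuple


def quantize_absmax(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Return (integer codes, scale) for a symmetric quantization of `weights`."""
    qmax = 2 ** (bits - 1) - 1          # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Approximately reconstruct the original floats from codes and scale."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.44]
codes, scale = quantize_absmax(weights, bits=4)
restored = dequantize(codes, scale)
print(codes)
print([round(r, 3) for r in restored])
```

Each code needs only 4 bits instead of the 16 or 32 bits of the original float. The price is a rounding error of at most half the scale per weight.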

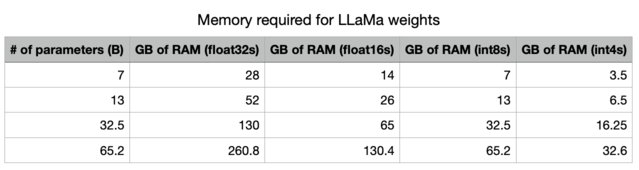

With less precision, we radically decrease the memory needed to store the LLM in memory.
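The arithmetic behind this is simple. Weight storage is roughly the number of parameters times the bits per parameter, divided by 8. The sketch below applies it to a 7B-parameter model. It counts only the weights and ignores the KV cache, activations, and the per-block scale overhead of real quantized formats, so treat the numbers as a lower bound.

```python
# Back-of-the-envelope memory needed just to hold model weights:
# bytes = number of parameters * bits per parameter / 8.
# Ignores the KV cache, activations, and quantization-format overhead.

def weight_memory_gb(n_params: float, bits_per_param: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


for bits in (32, 16, 8, 4):
    print(f"7B model at {bits:>2}-bit: {weight_memory_gb(7e9, bits):5.1f} GB")
```

Going from 16-bit to 4-bit cuts the weight footprint by 4x. That is often the difference between a model fitting in a laptop's memory or not.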

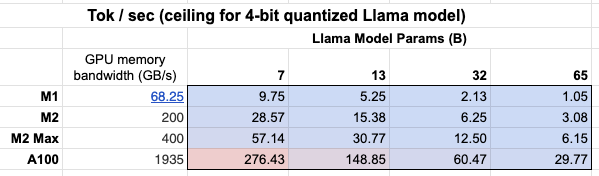

In addition, we can see the importance of GPU memory bandwidth in this table!

A Mac M2 Max is 5-6x faster than an M1 for inference due to its larger GPU memory bandwidth.
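A common rule of thumb explains why bandwidth matters so much. During token-by-token generation, each new token requires reading roughly all of the model weights from memory once, so decode speed is capped at about bandwidth divided by model size. The sketch below encodes that estimate. It is an upper bound under this assumption, not a benchmark, and the bandwidth figures are hypothetical round numbers.

```python
# Rule of thumb (an assumption, not a measurement): decoding is memory-bound,
# and each generated token streams roughly all weights from memory once,
# so tokens/s <= memory bandwidth / model size.

def max_tokens_per_second(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on decode speed for a memory-bandwidth-bound model."""
    return bandwidth_gb_s / model_size_gb


# Hypothetical values for illustration only.
model_gb = 7.0  # e.g. a 13B model at ~4 bits per weight, plus some overhead
for label, bandwidth in [("100 GB/s", 100.0), ("400 GB/s", 400.0)]:
    bound = max_tokens_per_second(bandwidth, model_gb)
    print(f"{label}: at most ~{bound:.1f} tokens/s")
```

Under this model, throughput scales linearly with bandwidth. Quadrupling bandwidth quadruples the ceiling, which is consistent with the large speedup described above.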

Formatting prompts

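Some providers have chat model wrappers that take care of formatting your input prompt for the specific local model you're using. However, if you are prompting local models with a text-in/text-out LLM wrapper, you may need to use a prompt tailored to your specific model.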

This can require the inclusion of special tokens. Here's an example for LLaMA 2.
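As a sketch of what "special tokens" means in practice, the function below wraps a system prompt and a single user turn in the publicly documented LLaMA 2 chat markers ([INST], [/INST], <<SYS>>, <</SYS>>). The helper name is hypothetical. Whether the BOS token "<s>" should be written literally depends on your runtime, because many tokenizers add it automatically, so it is optional here.

```python
# Sketch of the LLaMA 2 chat prompt layout for one system prompt + one user turn.
# Whether "<s>" (BOS) belongs in the string depends on your runtime/tokenizer.

B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def format_llama2_prompt(system: str, user: str, add_bos: bool = True) -> str:
    """Wrap a system prompt and a user message in LLaMA 2 chat markers."""
    bos = "<s>" if add_bos else ""
    return f"{bos}{B_INST} {B_SYS}{system}{E_SYS}{user} {E_INST}"


print(format_llama2_prompt("You are a helpful assistant.",
                           "Who was the first man on the moon?"))
```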

Quickstart

Ollama is one way to easily run inference on macOS.

The instructions here provide details, which we summarize:

%pip install -qU langchain_ollama

from langchain_ollama import OllamaLLM

llm = OllamaLLM(model="llama3.1:8b")

llm.invoke("The first man on the moon was ...")

'...Neil Armstrong!\n\nOn July 20, 1969, Neil Armstrong became the first person to set foot on the lunar surface, famously declaring "That\'s one small step for man, one giant leap for mankind" as he stepped off the lunar module Eagle onto the Moon\'s surface.\n\nWould you like to know more about the Apollo 11 mission or Neil Armstrong\'s achievements?'
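The OllamaLLM wrapper talks to a locally running Ollama server. To see what happens at the HTTP level, here is a minimal standard-library sketch that calls the server's /api/generate endpoint directly. It assumes the default address http://localhost:11434 and a model that has already been pulled. The helper names are hypothetical, and `generate` only works while the server is running.

```python
# Minimal sketch: call a running Ollama server's REST API with only the stdlib.
# Assumes the default address http://localhost:11434 and an already-pulled model.
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str,
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request and return the generated text (needs a live server)."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `generate("llama3.1:8b", "The first man on the moon was ...")` should return a completion similar to the one above.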

Stream tokens as they are being generated:

for chunk in llm.stream("The first man on the moon was ..."):
    print(chunk, end="|", flush=True)

...|


Neil| Armstrong|,| an| American| astronaut|.| He| stepped| out| of| the| lunar| module| Eagle| and| onto| the| surface| of| the| Moon| on| July| |20|,| |196|9|,| famously| declaring|:| "|That|'s| one| small| step| for| man|,| one| giant| leap| for| mankind|."||
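On the wire, streaming works as follows. When "stream" is true, Ollama's /api/generate returns newline-delimited JSON with one object per chunk. Each object carries a "response" text fragment and a "done" flag. The sketch below reassembles such a stream. The sample lines are made up for illustration, and the function name is hypothetical.

```python
# Sketch: reassemble text from Ollama-style newline-delimited JSON chunks.
# The sample lines are invented for illustration.
import json
from typing import Iterable, Iterator


def iter_ollama_chunks(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from NDJSON lines until a chunk reports done."""
    for line in lines:
        if not line.strip():
            continue                      # tolerate blank keep-alive lines
        chunk = json.loads(line)
        if chunk.get("response"):
            yield chunk["response"]
        if chunk.get("done"):
            break                         # final chunk carries stats, no more text


sample = [
    '{"response": "Neil", "done": false}',
    '{"response": " Armstrong", "done": false}',
    '{"response": "", "done": true}',
]
print("|".join(iter_ollama_chunks(sample)))
```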

Ollama also includes a chat model wrapper that handles formatting conversation turns:

from langchain_ollama import ChatOllama

chat_model = ChatOllama(model="llama3.1:8b")

chat_model.invoke("Who was the first man on the moon?")

AIMessage(content='The answer is a historic one!\n\nThe first man to walk on the Moon was Neil Armstrong, an American astronaut and commander of the Apollo 11 mission. On July 20, 1969, Armstrong stepped out of the lunar module Eagle onto the surface of the Moon, famously declaring:\n\n"That\'s one small step for man, one giant leap for mankind."\n\nArmstrong was followed by fellow astronaut Edwin "Buzz" Aldrin, who also walked on the Moon during the mission. Michael Collins remained in orbit around the Moon in the command module Columbia.\n\nNeil Armstrong passed away on August 25, 2012, but his legacy as a pioneering astronaut and engineer continues to inspire people around the world!', response_metadata={'model': 'llama3.1:8b', 'created_at': '2024-08-01T00:38:29.176717Z', 'message': {'role': 'assistant', 'content': ''}, 'done_reason': 'stop', 'done': True, 'total_duration': 10681861417, 'load_duration': 34270292, 'prompt_eval_count': 19, 'prompt_eval_duration': 6209448000, 'eval_count': 141, 'eval_duration': 4432022000}, id='run-7bed57c5-7f54-4092-912c-ae49073dcd48-0', usage_metadata={'input_tokens': 19, 'output_tokens': 141, 'total_tokens': 160})

Environment

Inference speed is a challenge when running models locally (see above).

To minimize latency, it is desirable to run models locally on a GPU, which ships with many consumer laptops (e.g., Apple devices).

Even with a GPU, the available GPU memory bandwidth (as noted above) is important.

Running on Apple silicon GPUs

Ollama and llamafile will automatically utilize the GPU on Apple devices.

Other frameworks require the user to set up the environment to utilize the Apple GPU.

For example, the llama.cpp Python bindings can be configured to use the GPU via Metal.

Metal is a graphics and compute API created by Apple that provides near-direct access to the GPU.

In particular, ensure that conda is using the correct virtual environment that you created (miniforge3).

For example, for me:

conda activate /Users/rlm/miniforge3/envs/llama

With the above confirmed, run:

CMAKE_ARGS="-DLLAMA_METAL=on" FORCE_CMAKE=1 pip install -U llama-cpp-python --no-cache-dir
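If you script this setup, a quick platform check helps avoid a common pitfall. Python reports platform.machine() == "x86_64" when it runs under Rosetta rather than natively on Apple silicon, which is one reason to confirm you are in the native miniforge3 environment first. The helpers below are hypothetical and not part of llama-cpp-python. They reuse the same CMAKE_ARGS and FORCE_CMAKE values as the command above.

```python
# Hypothetical helpers: check for a native Apple silicon Python before applying
# the Metal build flags used in the pip command above.
import os
import platform
from typing import Dict, Optional


def is_apple_silicon(system: Optional[str] = None, machine: Optional[str] = None) -> bool:
    """True for macOS on arm64; an x86_64 Python under Rosetta returns False."""
    system = system if system is not None else platform.system()
    machine = machine if machine is not None else platform.machine()
    return system == "Darwin" and machine == "arm64"


def metal_build_env() -> Dict[str, str]:
    """Copy of os.environ with the Metal CMake flags added on Apple silicon."""
    env = dict(os.environ)
    if is_apple_silicon():
        env["CMAKE_ARGS"] = "-DLLAMA_METAL=on"
        env["FORCE_CMAKE"] = "1"
    return env
```

The resulting dictionary can be passed as `env=` to `subprocess.run` when invoking `pip install -U llama-cpp-python --no-cache-dir`.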

LLMs

There are various ways to gain access to quantized model weights.

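- HuggingFace: Many quantized models are available for download and can be run with a framework such as llama.cpp. You can also download models in llamafile format from HuggingFace.
- gpt4all: The model explorer offers a leaderboard of metrics and associated quantized models available for download
- Ollama: Several models can be accessed directly via pull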

Ollama

With Ollama, fetch a model via ollama pull <model family>:<tag>:

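- For example, for Llama 2 7b: ollama pull llama2 will download the most basic version of the model (e.g., the smallest number of parameters and 4-bit quantization)
- We can also specify a particular version from the model list, e.g., ollama pull llama2:13b
- See the full set of parameters on the API reference page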

llm = OllamaLLM(model="llama2:13b")

llm.invoke("The first man on the moon was ... think step by step")

' Sure! Here\'s the answer, broken down step by step:\n\nThe first man on the moon was... Neil Armstrong.\n\nHere\'s how I arrived at that answer:\n\n1. The first manned mission to land on the moon was Apollo 11.\n2. The mission included three astronauts: Neil Armstrong, Edwin "Buzz" Aldrin, and Michael Collins.\n3. Neil Armstrong was the mission commander and the first person to set foot on the moon.\n4. On July 20, 1969, Armstrong stepped out of the lunar module Eagle and onto the moon\'s surface, famously declaring "That\'s one small step for man, one giant leap for mankind."\n\nSo, the first man on the moon was Neil Armstrong!'
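The `<model family>:<tag>` naming used with ollama pull can be illustrated with a small parser. If the tag is omitted, Ollama falls back to its default "latest" tag, which is why plain `ollama pull llama2` works. The helper is hypothetical and deliberately simple: it does not handle registry hosts that include a port.

```python
# Hypothetical helper illustrating Ollama's "<model family>:<tag>" naming.
# Simplified: does not handle registry hosts with ports (e.g. "host:5000/...").
from typing import Tuple


def parse_model_ref(ref: str, default_tag: str = "latest") -> Tuple[str, str]:
    """Split "family:tag" into its parts, using the default tag when omitted."""
    family, sep, tag = ref.partition(":")
    if not family:
        raise ValueError(f"missing model family in {ref!r}")
    return family, (tag if sep and tag else default_tag)


for ref in ("llama2", "llama2:13b", "llama3.1:8b"):
    print(ref, "->", parse_model_ref(ref))
```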

Llama.cpp

Llama.cpp is compatible with a broad set of models.

For example, below we run inference on a 4-bit quantized llama2-13b model (openorca-platypus2-13b) downloaded from HuggingFace.

As noted above, see the API reference for the full set of parameters.

From the llama.cpp API reference docs, a few parameters are worth commenting on:

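n_gpu_layers: number of layers to be loaded into GPU memory

- Value: 1
- Meaning: Only one layer of the model will be loaded into GPU memory (1 is often sufficient).

n_batch: number of tokens the model should process in parallel

- Value: 512 (as set in the example below)
- Meaning: It's recommended to choose a value between 1 and n_ctx (which in this case is set to 2048)

n_ctx: token context window

- Value: 2048
- Meaning: The model will consider a window of 2048 tokens at a time

f16_kv: whether the model should use half-precision for the key/value cache

- Value: True
- Meaning: The model will use half-precision, which can be more memory efficient; Metal only supports True.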
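The guidance above can be encoded as a quick sanity check before constructing the model. This is a hypothetical helper, not part of LangChain or llama-cpp-python. It only checks the constraints stated in the list (1 <= n_batch <= n_ctx, and f16_kv=True on Metal). It also flags n_gpu_layers=0, because that keeps every layer on the CPU.

```python
# Hypothetical sanity check for the llama.cpp parameters discussed above.
from typing import List


def check_llamacpp_params(n_gpu_layers: int, n_batch: int, n_ctx: int,
                          f16_kv: bool, use_metal: bool = True) -> List[str]:
    """Return human-readable problems; an empty list means the values look consistent."""
    problems = []
    if n_ctx < 1:
        problems.append(f"n_ctx must be positive, got {n_ctx}")
    if not 1 <= n_batch <= max(n_ctx, 1):
        problems.append(f"n_batch={n_batch} should be between 1 and n_ctx={n_ctx}")
    if use_metal and not f16_kv:
        problems.append("Metal only supports f16_kv=True")
    if use_metal and n_gpu_layers == 0:
        problems.append("n_gpu_layers=0 keeps every layer on the CPU, so Metal is unused")
    return problems


print(check_llamacpp_params(n_gpu_layers=1, n_batch=512, n_ctx=2048, f16_kv=True))
print(check_llamacpp_params(n_gpu_layers=1, n_batch=4096, n_ctx=2048, f16_kv=False))
```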

%env CMAKE_ARGS="-DLLAMA_METAL=on"

%env FORCE_CMAKE=1

%pip install --upgrade --quiet llama-cpp-python --no-cache-dir

from langchain_community.llms import LlamaCpp

from langchain_core.callbacks import CallbackManager, StreamingStdOutCallbackHandler

llm = LlamaCpp(

model_path="/Users/rlm/Desktop/Code/llama.cpp/models/openorca-platypus2-13b.gguf.q4_0.bin",

n_gpu_layers=1,

n_batch=512,

n_ctx=2048,

f16_kv=True,

callback_manager=CallbackManager([StreamingStdOutCallbackHandler()]),

verbose=True,

)

The console log will show the following to indicate that Metal was enabled properly by the steps above:

ggml_metal_init: allocating

ggml_metal_init: using MPS

llm.invoke("The first man on the moon was ... Let's think step by step")

Llama.generate: prefix-match hit


and use logical reasoning to figure out who the first man on the moon was.

Here are some clues:

1. The first man on the moon was an American.

2. He was part of the Apollo 11 mission.

3. He stepped out of the lunar module and became the first person to set foot on the moon's surface.

4. His last name is Armstrong.

Now, let's use our reasoning skills to figure out who the first man on the moon was. Based on clue #1, we know that the first man on the moon was an American. Clue #2 tells us that he was part of the Apollo 11 mission. Clue #3 reveals that he was the first person to set foot on the moon's surface. And finally, clue #4 gives us his last name: Armstrong.

Therefore, the first man on the moon was Neil Armstrong!


llama_print_timings: load time = 9623.21 ms

llama_print_timings: sample time = 143.77 ms / 203 runs ( 0.71 ms per token, 1412.01 tokens per second)

llama_print_timings: prompt eval time = 485.94 ms / 7 tokens ( 69.42 ms per token, 14.40 tokens per second)

llama_print_timings: eval time = 6385.16 ms / 202 runs ( 31.61 ms per token, 31.64 tokens per second)

llama_print_timings: total time = 7279.28 ms

" and use logical reasoning to figure out who the first man on the moon was.\n\nHere are some clues:\n\n1. The first man on the moon was an American.\n2. He was part of the Apollo 11 mission.\n3. He stepped out of the lunar module and became the first person to set foot on the moon's surface.\n4. His last name is Armstrong.\n\nNow, let's use our reasoning skills to figure out who the first man on the moon was. Based on clue #1, we know that the first man on the moon was an American. Clue #2 tells us that he was part of the Apollo 11 mission. Clue #3 reveals that he was the first person to set foot on the moon's surface. And finally, clue #4 gives us his last name: Armstrong.\nTherefore, the first man on the moon was Neil Armstrong!"
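The llama_print_timings lines above are the quickest way to compare settings such as n_gpu_layers. The hypothetical parser below extracts a stage name and a tokens-per-second figure from lines that report a count. It assumes the log layout shown in this section, which may differ between llama.cpp versions.

```python
# Hypothetical parser for llama_print_timings lines in the format shown above.
# The log layout is an assumption based on this output and may change upstream.
import re
from typing import Optional, Tuple

TIMING_RE = re.compile(
    r"llama_print_timings:\s+(?P<stage>[a-z ]+?)\s*=\s*(?P<ms>[\d.]+)\s*ms"
    r"\s*/\s*(?P<count>\d+)\s+(?:runs|tokens)"
)


def tokens_per_second(line: str) -> Optional[Tuple[str, float]]:
    """Return (stage, tokens/s) or None for lines without a token/run count."""
    m = TIMING_RE.search(line)
    if m is None:
        return None
    return m["stage"], int(m["count"]) * 1000.0 / float(m["ms"])


log = [
    "llama_print_timings: load time = 9623.21 ms",
    "llama_print_timings: prompt eval time = 485.94 ms / 7 tokens ( 69.42 ms per token, 14.40 tokens per second)",
    "llama_print_timings: eval time = 6385.16 ms / 202 runs ( 31.61 ms per token, 31.64 tokens per second)",
]
for line in log:
    print(tokens_per_second(line))
```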

GPT4All

We can use model weights downloaded from the GPT4All model explorer.

Similar to what is shown above, we can run inference and use the API reference to set parameters of interest.

%pip install gpt4all

from langchain_community.llms import GPT4All

llm = GPT4All(

model="/Users/rlm/Desktop/Code/gpt4all/models/nous-hermes-13b.ggmlv3.q4_0.bin"

)

llm.invoke("The first man on the moon was ... Let's think step by step")

".\n1) The United States decides to send a manned mission to the moon.2) They choose their best astronauts and train them for this specific mission.3) They build a spacecraft that can take humans to the moon, called the Lunar Module (LM).4) They also create a larger spacecraft, called the Saturn V rocket, which will launch both the LM and the Command Service Module (CSM), which will carry the astronauts into orbit.5) The mission is planned down to the smallest detail: from the trajectory of the rockets to the exact movements of the astronauts during their moon landing.6) On July 16, 1969, the Saturn V rocket launches from Kennedy Space Center in Florida, carrying the Apollo 11 mission crew into space.7) After one and a half orbits around the Earth, the LM separates from the CSM and begins its descent to the moon's surface.8) On July 20, 1969, at 2:56 pm EDT (GMT-4), Neil Armstrong becomes the first man on the moon. He speaks these"

llamafile

One of the simplest ways to run an LLM locally is using a llamafile. All you need to do is:

- Download a llamafile from HuggingFace
- Make the file executable
- Run the file

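llamafiles bundle model weights and a specially-compiled version of llama.cpp into a single file that can run on most computers without any additional dependencies. They also come with an embedded inference server that provides an API for interacting with your model.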

Here's a simple bash script that shows all 3 setup steps:

# Download a llamafile from HuggingFace

wget https://huggingface.co/jartine/TinyLlama-1.1B-Chat-v1.0-GGUF/resolve/main/TinyLlama-1.1B-Chat-v1.0.Q5_K_M.llamafile

# Make the file executable. On Windows, instead just rename the file to end in ".exe".

chmod +x TinyLlama-1.1B-Chat-v1.0.Q5_K_M.llamafile

# Start the model server. Listens at http://localhost:8080 by default.

./TinyLlama-1.1B-Chat-v1.0.Q5_K_M.llamafile --server --nobrowser

After you run the above setup steps, you can use LangChain to interact with your model:

from langchain_community.llms.llamafile import Llamafile

llm = Llamafile()

llm.invoke("The first man on the moon was ... Let's think step by step.")

"\nFirstly, let's imagine the scene where Neil Armstrong stepped onto the moon. This happened in 1969. The first man on the moon was Neil Armstrong. We already know that.\n2nd, let's take a step back. Neil Armstrong didn't have any special powers. He had to land his spacecraft safely on the moon without injuring anyone or causing any damage. If he failed to do this, he would have been killed along with all those people who were on board the spacecraft.\n3rd, let's imagine that Neil Armstrong successfully landed his spacecraft on the moon and made it back to Earth safely. The next step was for him to be hailed as a hero by his people back home. It took years before Neil Armstrong became an American hero.\n4th, let's take another step back. Let's imagine that Neil Armstrong wasn't hailed as a hero, and instead, he was just forgotten. This happened in the 1970s. Neil Armstrong wasn't recognized for his remarkable achievement on the moon until after he died.\n5th, let's take another step back. Let's imagine that Neil Armstrong didn't die in the 1970s and instead, lived to be a hundred years old. This happened in 2036. In the year 2036, Neil Armstrong would have been a centenarian.\nNow, let's think about the present. Neil Armstrong is still alive. He turned 95 years old on July 20th, 2018. If he were to die now, his achievement of becoming the first human being to set foot on the moon would remain an unforgettable moment in history.\nI hope this helps you understand the significance and importance of Neil Armstrong's achievement on the moon!"
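Because the llamafile embeds an inference server listening at http://localhost:8080 by default, you can also call it without LangChain. The sketch below assumes the llama.cpp-style /completion endpoint, with "prompt" and "n_predict" in the request and "content" in the JSON response. Check your llamafile version's server documentation before relying on it. The helper names are hypothetical, and `complete` only works while the server is running.

```python
# Sketch: call a running llamafile server directly with the stdlib.
# Assumes the llama.cpp-style /completion endpoint ("prompt", "n_predict" in;
# "content" out) and the default address from the bash script above.
import json
import urllib.request


def build_completion_request(prompt: str, n_predict: int = 128,
                             base_url: str = "http://localhost:8080") -> urllib.request.Request:
    """Build a POST request for the server's /completion endpoint."""
    payload = {"prompt": prompt, "n_predict": n_predict}
    return urllib.request.Request(
        f"{base_url}/completion",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt: str, n_predict: int = 128) -> str:
    """Send the request and return the generated text (needs a live server)."""
    with urllib.request.urlopen(build_completion_request(prompt, n_predict)) as resp:
        return json.loads(resp.read())["content"]
```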

Prompts

Some LLMs will benefit from specific prompts.

For example, LLaMA will use special tokens.

We can use ConditionalPromptSelector to set the prompt based on the model type.

# Set our LLM

llm = LlamaCpp(

model_path="/Users/rlm/Desktop/Code/llama.cpp/models/openorca-platypus2-13b.gguf.q4_0.bin",

n_gpu_layers=1,

n_batch=512,

n_ctx=2048,

f16_kv=True,

callback_manager=CallbackManager([StreamingStdOutCallbackHandler()]),

verbose=True,

)

Set the associated prompt based on the model version.

from langchain.chains.prompt_selector import ConditionalPromptSelector

from langchain_core.prompts import PromptTemplate

DEFAULT_LLAMA_SEARCH_PROMPT = PromptTemplate(
    input_variables=["question"],
    template="""<<SYS>> \n You are an assistant tasked with improving Google search \
results. \n <</SYS>> \n\n [INST] Generate THREE Google search queries that \
are similar to this question. The output should be a numbered list of questions \
and each should have a question mark at the end: \n\n {question} [/INST]""",
)

DEFAULT_SEARCH_PROMPT = PromptTemplate(
    input_variables=["question"],
    template="""You are an assistant tasked with improving Google search \
results. Generate THREE Google search queries that are similar to \
this question. The output should be a numbered list of questions and each \
should have a question mark at the end: {question}""",
)

QUESTION_PROMPT_SELECTOR = ConditionalPromptSelector(

default_prompt=DEFAULT_SEARCH_PROMPT,

conditionals=[(lambda llm: isinstance(llm, LlamaCpp), DEFAULT_LLAMA_SEARCH_PROMPT)],

)

prompt = QUESTION_PROMPT_SELECTOR.get_prompt(llm)

prompt

PromptTemplate(input_variables=['question'], output_parser=None, partial_variables={}, template='<<SYS>> \n You are an assistant tasked with improving Google search results. \n <</SYS>> \n\n [INST] Generate THREE Google search queries that are similar to this question. The output should be a numbered list of questions and each should have a question mark at the end: \n\n {question} [/INST]', template_format='f-string', validate_template=True)

# Chain

chain = prompt | llm

question = "What NFL team won the Super Bowl in the year that Justin Bieber was born?"

chain.invoke({"question": question})

Sure! Here are three similar search queries with a question mark at the end:

1. Which NBA team did LeBron James lead to a championship in the year he was drafted?

2. Who won the Grammy Awards for Best New Artist and Best Female Pop Vocal Performance in the same year that Lady Gaga was born?

3. What MLB team did Babe Ruth play for when he hit 60 home runs in a single season?


llama_print_timings: load time = 14943.19 ms

llama_print_timings: sample time = 72.93 ms / 101 runs ( 0.72 ms per token, 1384.87 tokens per second)

llama_print_timings: prompt eval time = 14942.95 ms / 93 tokens ( 160.68 ms per token, 6.22 tokens per second)

llama_print_timings: eval time = 3430.85 ms / 100 runs ( 34.31 ms per token, 29.15 tokens per second)

llama_print_timings: total time = 18578.26 ms

' Sure! Here are three similar search queries with a question mark at the end:\n\n1. Which NBA team did LeBron James lead to a championship in the year he was drafted?\n2. Who won the Grammy Awards for Best New Artist and Best Female Pop Vocal Performance in the same year that Lady Gaga was born?\n3. What MLB team did Babe Ruth play for when he hit 60 home runs in a single season?'
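The selection logic used above can be reproduced in plain Python. Conditionals are checked in order, the prompt paired with the first matching condition wins, and otherwise the default prompt is used. This sketch mirrors that first-match behavior. It is not LangChain's implementation, and the classes are hypothetical stand-ins for real LLM wrappers.

```python
# Plain-Python sketch of first-match-wins prompt selection, mirroring how the
# ConditionalPromptSelector above is used. The classes are hypothetical stand-ins.
from typing import Any, Callable, List, Tuple

Condition = Callable[[Any], bool]


def select_prompt(llm: Any, default_prompt: str,
                  conditionals: List[Tuple[Condition, str]]) -> str:
    """Return the prompt paired with the first condition that matches, else the default."""
    for condition, prompt in conditionals:
        if condition(llm):
            return prompt
    return default_prompt


class FakeLlamaCpp:   # stand-in for a LLaMA-family local model wrapper
    pass


class FakeOtherLLM:   # stand-in for any other model wrapper
    pass


conditionals = [(lambda llm: isinstance(llm, FakeLlamaCpp), "[INST] {question} [/INST]")]
print(select_prompt(FakeLlamaCpp(), "{question}", conditionals))
print(select_prompt(FakeOtherLLM(), "{question}", conditionals))
```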

We can also use the LangChain Prompt Hub to fetch and/or store prompts that are model-specific.

This will work with your LangSmith API key.

For example, here is a prompt for RAG with LLaMA-specific tokens.

Use cases

Given an llm created from one of the models above, you can use it for many use cases.

For example, you can implement a RAG application using the chat models demonstrated here.

In general, use cases for local LLMs can be driven by at least two factors:

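- Privacy: Private data (e.g., journals) that a user does not want to share
- Cost: Text preprocessing (extraction/tagging), summarization, and agent simulations are token-intensive tasks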

In addition, here is an overview of fine-tuning, which can utilize open-source LLMs.