Summarize Text

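This tutorial demonstrates text summarization using built-in chains and LangGraph.

A previous version of this page showcased the legacy chains StuffDocumentsChain, MapReduceDocumentsChain, and RefineDocumentsChain. A separate guide covers how to use those abstractions and how they compare with the methods demonstrated in this tutorial.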

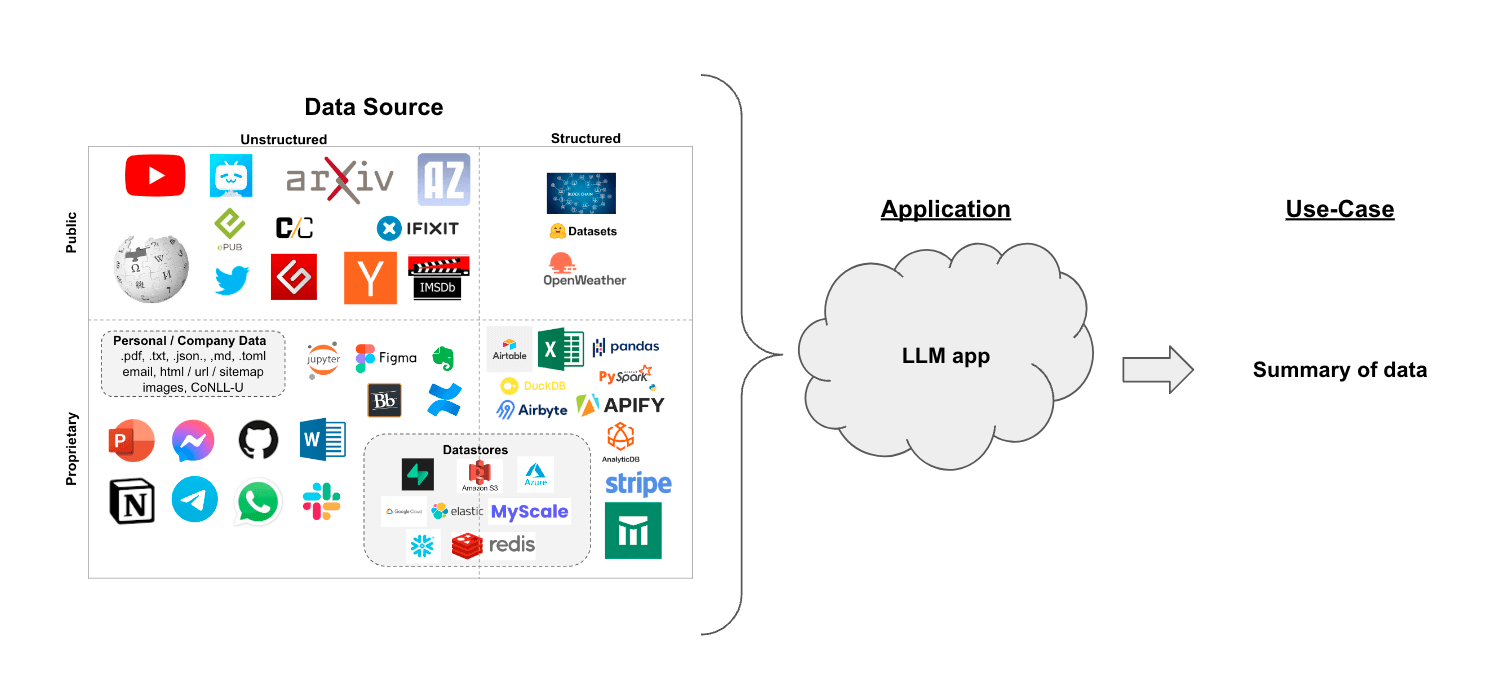

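Suppose you have a set of documents (PDFs, Notion pages, customer questions, etc.) and you want to summarize the content.

Large language models (LLMs) are a great tool for this, given their proficiency in understanding and synthesizing text.

In the context of retrieval-augmented generation, summarizing text can help distill the information in a large number of retrieved documents to provide context for an LLM.

In this walkthrough we'll go over how to summarize content from multiple documents using LLMs.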

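Concepts

Concepts we will cover are:

- Using language models.

- Using document loaders, specifically the WebBaseLoader to load content from an HTML webpage.

- Two ways to summarize or otherwise combine documents.

Shorter, more targeted guides on these strategies and others, including iterative refinement, can be found in the how-to guides.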

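Setup

Jupyter Notebook

This guide (and most of the other guides in the documentation) uses Jupyter notebooks and assumes the reader is familiar with them. Jupyter notebooks are well suited to learning how to work with LLM systems because things often go wrong (unexpected output, API outages, etc.), and stepping through a guide in an interactive environment is a great way to understand them better.

This and other tutorials are perhaps most conveniently run in a Jupyter notebook. See the Jupyter documentation for installation instructions.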

Installation

To install LangChain, run one of the following:

- Pip

pip install langchain

- Conda

conda install langchain -c conda-forge

For more details, see our Installation guide.

LangSmith

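Many of the applications you build with LangChain will contain multiple steps with multiple invocations of LLM calls. As these applications get more complex, it becomes crucial to be able to inspect what exactly is going on inside your chain or agent. The best way to do this is with LangSmith.

After you sign up for LangSmith, make sure to set your environment variables to start logging traces: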

export LANGSMITH_TRACING="true"

export LANGSMITH_API_KEY="..."

Or, if in a notebook, you can set them with:

import getpass

import os

os.environ["LANGSMITH_TRACING"] = "true"

os.environ["LANGSMITH_API_KEY"] = getpass.getpass()

Overview

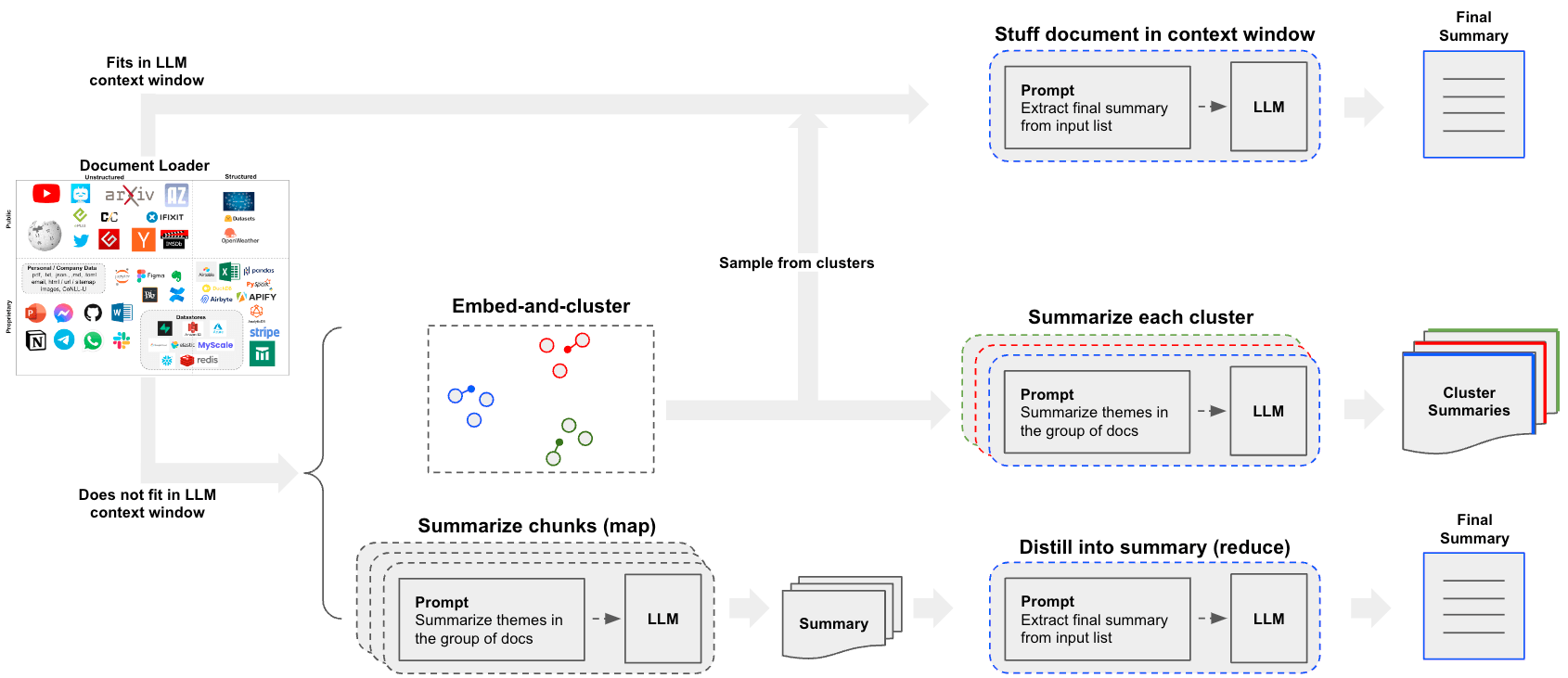

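A central question for building a summarizer is how to pass your documents into the LLM's context window. Two common approaches for this are:

- Stuff: simply "stuff" all your documents into a single prompt. This is the simplest approach (it uses the create_stuff_documents_chain constructor).

- Map-reduce: summarize each document on its own in a "map" step, then "reduce" the summaries into a final summary (the legacy MapReduceDocumentsChain also implements this method).

Note that map-reduce is especially effective when understanding a sub-document does not rely on preceding context, for example when summarizing a corpus of many shorter documents. In other cases, such as summarizing a novel or a body of text with an inherent sequence, iterative refinement may be more effective.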
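To make the difference between the two approaches concrete, here is a minimal pure-Python sketch. It does not call a real model: `FakeLLM`, `stuff`, and `map_reduce` are hypothetical names introduced for illustration, and the "summary" is just a truncation. The point is only the shape of the calls: stuffing issues one call over everything, while map-reduce issues one call per document plus one to combine.

```python
from typing import Callable, List


class FakeLLM:
    """Hypothetical stand-in for a model: records prompts, truncates text."""

    def __init__(self, max_words: int = 6) -> None:
        self.max_words = max_words
        self.prompts: List[str] = []

    def summarize(self, text: str) -> str:
        self.prompts.append(text)
        return " ".join(text.split()[: self.max_words])


def stuff(docs: List[str], summarize: Callable[[str], str]) -> str:
    """Stuff: put every document into one prompt and make one call."""
    return summarize("\n\n".join(docs))


def map_reduce(docs: List[str], summarize: Callable[[str], str]) -> str:
    """Map-reduce: summarize each document, then summarize the summaries."""
    partial = [summarize(doc) for doc in docs]  # map step
    return summarize("\n\n".join(partial))  # reduce step


docs = [
    "Agents plan tasks by splitting goals into smaller steps.",
    "Agents store facts in short-term and long-term memory.",
]

stuff_llm = FakeLLM()
stuff_summary = stuff(docs, stuff_llm.summarize)

mr_llm = FakeLLM()
mr_summary = map_reduce(docs, mr_llm.summarize)

print(len(stuff_llm.prompts), "call for stuff")
print(len(mr_llm.prompts), "calls for map-reduce")
```

With stuffing, the single prompt grows with the total size of the corpus; with map-reduce, each map prompt is bounded by the size of one document, which is what makes it suitable for long inputs.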

Setup

First set environment variables and install packages:

%pip install --upgrade --quiet tiktoken langchain langgraph beautifulsoup4 langchain-community

# Set env var OPENAI_API_KEY or load from a .env file

# import dotenv

# dotenv.load_dotenv()

import os

os.environ["LANGSMITH_TRACING"] = "true"

First we load in our documents. We will use WebBaseLoader to load a blog post:

from langchain_community.document_loaders import WebBaseLoader

loader = WebBaseLoader("https://lilianweng.github.io/posts/2023-06-23-agent/")

docs = loader.load()

Let's next select an LLM:

pip install -qU "langchain[openai]"

import getpass
import os

if not os.environ.get("OPENAI_API_KEY"):
    os.environ["OPENAI_API_KEY"] = getpass.getpass("Enter API key for OpenAI: ")

from langchain.chat_models import init_chat_model

llm = init_chat_model("gpt-4o-mini", model_provider="openai")

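Stuff: summarize in a single LLM call

We can use create_stuff_documents_chain, especially with models that have larger context windows, such as:

- 128k token OpenAI gpt-4o

- 200k token Anthropic claude-3-5-sonnet-20240620

The chain will take a list of documents, insert them all into a prompt, and pass that prompt to an LLM: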

from langchain.chains.combine_documents import create_stuff_documents_chain
from langchain_core.prompts import ChatPromptTemplate

# Define prompt
prompt = ChatPromptTemplate.from_messages(
    [("system", "Write a concise summary of the following:\n\n{context}")]
)

# Instantiate chain
chain = create_stuff_documents_chain(llm, prompt)

# Invoke chain
result = chain.invoke({"context": docs})
print(result)

The article "LLM Powered Autonomous Agents" by Lilian Weng discusses the development and capabilities of autonomous agents powered by large language models (LLMs). It outlines a system architecture that includes three main components: Planning, Memory, and Tool Use.

1. **Planning** involves task decomposition, where complex tasks are broken down into manageable subgoals, and self-reflection, allowing agents to learn from past actions to improve future performance. Techniques like Chain of Thought (CoT) and Tree of Thoughts (ToT) are highlighted for enhancing reasoning and planning.

2. **Memory** is categorized into short-term and long-term memory, with mechanisms for fast retrieval using Maximum Inner Product Search (MIPS) algorithms. This allows agents to retain and recall information effectively.

3. **Tool Use** enables agents to interact with external APIs and tools, enhancing their capabilities beyond the limitations of their training data. Examples include MRKL systems and frameworks like HuggingGPT, which facilitate task planning and execution.

The article also addresses challenges such as finite context length, difficulties in long-term planning, and the reliability of natural language interfaces. It concludes with case studies demonstrating the practical applications of these concepts in scientific discovery and interactive simulations. Overall, the article emphasizes the potential of LLMs as powerful problem solvers in autonomous agent systems.
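To illustrate what "inserting all documents into a prompt" amounts to, here is a minimal sketch of the formatting step on its own. It is not the library's implementation: the `Doc` class, the `build_stuff_prompt` helper, and the blank-line separator are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List

TEMPLATE = "Write a concise summary of the following:\n\n{context}"


@dataclass
class Doc:
    """Hypothetical minimal document type holding only page content."""

    page_content: str


def build_stuff_prompt(docs: List[Doc], separator: str = "\n\n") -> str:
    """Join all document contents and place them in the {context} slot."""
    context = separator.join(doc.page_content for doc in docs)
    return TEMPLATE.format(context=context)


prompt_text = build_stuff_prompt([Doc("First page."), Doc("Second page.")])
print(prompt_text)
```

Because every document ends up in the same prompt, the combined length has to fit within the model's context window, which is why this approach pairs best with large-context models.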

Streaming

Note that we can also stream the result token-by-token:

for token in chain.stream({"context": docs}):
    print(token, end="|")

|The| article| "|LL|M| Powered| Autonomous| Agents|"| by| Lil|ian| W|eng| discusses| the| development| and| capabilities| of| autonomous| agents| powered| by| large| language| models| (|LL|Ms|).| It| outlines| a| system| architecture| that| includes| three| main| components|:| Planning|,| Memory|,| and| Tool| Use|.|

|1|.| **|Planning|**| involves| task| decomposition|,| where| complex| tasks| are| broken| down| into| manageable| sub|go|als|,| and| self|-ref|lection|,| allowing| agents| to| learn| from| past| actions| to| improve| future| performance|.| Techniques| like| Chain| of| Thought| (|Co|T|)| and| Tree| of| Thoughts| (|To|T|)| are| highlighted| for| enhancing| reasoning| and| planning|.

|2|.| **|Memory|**| is| categorized| into| short|-term| and| long|-term| memory|,| with| mechanisms| for| fast| retrieval| using| Maximum| Inner| Product| Search| (|M|IPS|)| algorithms|.| This| allows| agents| to| retain| and| recall| information| effectively|.

|3|.| **|Tool| Use|**| emphasizes| the| integration| of| external| APIs| and| tools| to| extend| the| capabilities| of| L|LM|s|,| enabling| them| to| perform| tasks| beyond| their| inherent| limitations|.| Examples| include| MR|KL| systems| and| frameworks| like| Hug|ging|GPT|,| which| facilitate| task| planning| and| execution|.

|The| article| also| addresses| challenges| such| as| finite| context| length|,| difficulties| in| long|-term| planning|,| and| the| reliability| of| natural| language| interfaces|.| It| concludes| with| case| studies| demonstrating| the| practical| applications| of| L|LM|-powered| agents| in| scientific| discovery| and| interactive| simulations|.| Overall|,| the| piece| illustrates| the| potential| of| L|LM|s| as| general| problem| sol|vers| and| their| evolving| role| in| autonomous| systems|.||
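The loop above only prints chunks as they arrive. If the full text is also needed afterwards, the chunks can be collected and joined. The sketch below shows that pattern with a hypothetical `fake_stream` generator standing in for `chain.stream`; the empty first chunk is an assumption that mirrors the leading `|` in the output above.

```python
from typing import Iterator, List


def fake_stream(text: str) -> Iterator[str]:
    """Hypothetical token stream: an empty first chunk, then word pieces."""
    yield ""
    for i, word in enumerate(text.split(" ")):
        # Later pieces carry their leading space, as in the output above.
        yield word if i == 0 else " " + word


chunks: List[str] = []
for token in fake_stream("The article discusses agents."):
    print(token, end="|")  # show chunk boundaries while streaming
    chunks.append(token)

full_text = "".join(chunks)  # reassemble the complete response
print()
print(full_text)
```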

Go deeper

- You can easily customize the prompt.

- You can easily try different LLMs (e.g., Claude) via the llm parameter.

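Map-Reduce: summarize long texts via parallelization

Let's unpack the map-reduce approach. First, we map each document to an individual summary using an LLM. Then we reduce, or consolidate, those summaries into a single global summary.

Note that the map step is typically parallelized over the input documents.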
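As a rough illustration of a parallelized map step, the sketch below runs several summarization calls concurrently with `asyncio.gather` from the standard library. The `fake_summarize` coroutine is a hypothetical stand-in for an async model call, and this is not how LangGraph schedules work internally; it only shows the general idea.

```python
import asyncio
from typing import List


async def fake_summarize(doc: str) -> str:
    """Hypothetical async model call; the sleep stands in for latency."""
    await asyncio.sleep(0.01)
    return doc.split(".")[0] + "."


async def map_step(docs: List[str]) -> List[str]:
    """Summarize all documents concurrently; results keep input order."""
    return await asyncio.gather(*(fake_summarize(d) for d in docs))


docs = [
    "Planning splits tasks. More detail follows.",
    "Memory stores facts. More detail follows.",
]

# In a notebook, where an event loop is already running,
# use `summaries = await map_step(docs)` instead.
summaries = asyncio.run(map_step(docs))
print(summaries)
```

Since the calls do not depend on one another, the total waiting time is close to that of the slowest single call rather than the sum of all calls.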

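LangGraph, which is built on top of langchain-core, supports map-reduce workflows and is well suited to this problem:

- LangGraph allows individual steps (such as successive summarizations) to be streamed, allowing for greater control of execution;

- LangGraph's checkpointing supports error recovery, extension with human-in-the-loop workflows, and easier incorporation into conversational applications;

- The LangGraph implementation is straightforward to modify and extend, as shown in the sections that follow.

Map

Let's first define the prompt associated with the map step. We can use the same summarization prompt as in the stuff approach above: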

from langchain_core.prompts import ChatPromptTemplate

map_prompt = ChatPromptTemplate.from_messages(
    [("system", "Write a concise summary of the following:\n\n{context}")]
)

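We can also use the Prompt Hub to store and fetch prompts.

This will work with your LangSmith API key.

For example, a map prompt is published on the hub as rlm/map-prompt: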

from langchain import hub

map_prompt = hub.pull("rlm/map-prompt")

Reduce

We also define a prompt that takes the document mapping results and reduces them into a single output.

# Also available via the hub: `hub.pull("rlm/reduce-prompt")`
reduce_template = """
The following is a set of summaries:
{docs}
Take these and distill it into a final, consolidated summary
of the main themes.
"""

reduce_prompt = ChatPromptTemplate([("human", reduce_template)])

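Orchestration via LangGraph

Below we implement a simple application that maps the summarization step over a list of documents, then reduces the results using the above prompts.

Map-reduce flows are particularly useful when texts are long compared to the context window of an LLM. For long texts, we need a mechanism that ensures that the context to be summarized in the reduce step does not exceed the model's context window size. Here we implement a recursive "collapsing" of the summaries: the inputs are partitioned based on a token limit, and a summary is generated for each partition. This step is repeated until the total length of the summaries is within a desired limit, allowing for the summarization of arbitrary-length text.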
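The recursive collapsing idea can be sketched without any model at all. In the example below, tokens are approximated by whitespace-separated words, and `fake_reduce` is a hypothetical stand-in for the LLM reduce call; `group_by_budget` and `collapse` are illustrative names, not library functions.

```python
from typing import List

TOKEN_MAX = 12  # hypothetical budget, counted in whitespace-separated words


def count_tokens(texts: List[str]) -> int:
    """Crude token count: number of whitespace-separated words."""
    return sum(len(t.split()) for t in texts)


def group_by_budget(texts: List[str], budget: int) -> List[List[str]]:
    """Greedily pack consecutive texts into groups that fit the budget."""
    groups: List[List[str]] = []
    current: List[str] = []
    for text in texts:
        if current and count_tokens(current + [text]) > budget:
            groups.append(current)
            current = []
        current.append(text)
    if current:
        groups.append(current)
    return groups


def fake_reduce(texts: List[str]) -> str:
    """Hypothetical stand-in for an LLM: keep the first 3 words of each text."""
    return " ".join(" ".join(t.split()[:3]) for t in texts)


def collapse(texts: List[str], budget: int, max_rounds: int = 10) -> List[str]:
    """Repeat group-and-reduce until the summaries fit within the budget."""
    for _ in range(max_rounds):
        if count_tokens(texts) <= budget:
            return texts
        texts = [fake_reduce(g) for g in group_by_budget(texts, budget)]
    raise RuntimeError("summaries did not shrink below the budget")


summaries = [f"s{i} alpha beta gamma delta" for i in range(6)]  # 30 words total
collapsed = collapse(summaries, TOKEN_MAX)
print(collapsed)
```

In this toy run the 30 words shrink to 18 after the first round and to 9 after the second, at which point they fit the budget and a final reduce call could safely take them all at once.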

First we chunk the blog post into smaller "sub documents" to be mapped:

from langchain_text_splitters import CharacterTextSplitter

text_splitter = CharacterTextSplitter.from_tiktoken_encoder(
    chunk_size=1000, chunk_overlap=0
)
split_docs = text_splitter.split_documents(docs)
print(f"Generated {len(split_docs)} documents.")

Created a chunk of size 1003, which is longer than the specified 1000

Generated 14 documents.
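The warning in the output above (a chunk of size 1003 against a limit of 1000) is what we would expect from a splitter that only cuts at separator boundaries: a piece between two separators that is longer than the limit cannot be divided further. The sketch below reproduces that behavior in plain Python, with words standing in for tokens. The `split_on_separator` helper is hypothetical and much simpler than the real splitter.

```python
from typing import List


def split_on_separator(
    text: str, chunk_size: int, separator: str = "\n\n"
) -> List[str]:
    """Merge separator-delimited pieces into chunks of at most chunk_size words.

    A single piece longer than chunk_size is kept whole, so it overshoots.
    """
    chunks: List[str] = []
    current: List[str] = []
    current_len = 0
    for piece in text.split(separator):
        piece_len = len(piece.split())
        if current and current_len + piece_len > chunk_size:
            chunks.append(separator.join(current))
            current, current_len = [], 0
        current.append(piece)
        current_len += piece_len
    if current:
        chunks.append(separator.join(current))
    return chunks


text = "one two three\n\nfour five\n\nsix seven eight nine ten eleven\n\ntwelve"
chunks = split_on_separator(text, chunk_size=5)
sizes = [len(c.split()) for c in chunks]
print(sizes)
```

The middle chunk holds six words even though the limit is five, because it is a single paragraph with no separator inside it.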

Next, we define our graph. Note that we define an artificially low maximum token length of 1,000 tokens to illustrate the "collapsing" step.

import operator
from typing import Annotated, List, Literal, TypedDict

from langchain.chains.combine_documents.reduce import (
    acollapse_docs,
    split_list_of_docs,
)
from langchain_core.documents import Document
from langgraph.constants import Send
from langgraph.graph import END, START, StateGraph

token_max = 1000


def length_function(documents: List[Document]) -> int:
    """Get number of tokens for input contents."""
    return sum(llm.get_num_tokens(doc.page_content) for doc in documents)


# This will be the overall state of the main graph.
# It will contain the input document contents, corresponding
# summaries, and a final summary.
class OverallState(TypedDict):
    contents: List[str]
    # Notice here we use operator.add.
    # This is because we want to combine all the summaries we generate
    # from individual nodes back into one list - this is essentially
    # the "reduce" part
    summaries: Annotated[list, operator.add]
    collapsed_summaries: List[Document]
    final_summary: str


# This will be the state of the node that we will "map" all
# documents to in order to generate summaries
class SummaryState(TypedDict):
    content: str


# Here we generate a summary, given a document
async def generate_summary(state: SummaryState):
    prompt = map_prompt.invoke(state["content"])
    response = await llm.ainvoke(prompt)
    return {"summaries": [response.content]}


# Here we define the logic to map out over the documents
# We will use this as an edge in the graph
def map_summaries(state: OverallState):
    # We will return a list of `Send` objects
    # Each `Send` object consists of the name of a node in the graph
    # as well as the state to send to that node
    return [
        Send("generate_summary", {"content": content}) for content in state["contents"]
    ]


def collect_summaries(state: OverallState):
    return {
        "collapsed_summaries": [Document(summary) for summary in state["summaries"]]
    }


async def _reduce(input: dict) -> str:
    prompt = reduce_prompt.invoke(input)
    response = await llm.ainvoke(prompt)
    return response.content


# Add node to collapse summaries
async def collapse_summaries(state: OverallState):
    doc_lists = split_list_of_docs(
        state["collapsed_summaries"], length_function, token_max
    )
    results = []
    for doc_list in doc_lists:
        results.append(await acollapse_docs(doc_list, _reduce))

    return {"collapsed_summaries": results}


# This represents a conditional edge in the graph that determines
# if we should collapse the summaries or not
def should_collapse(
    state: OverallState,
) -> Literal["collapse_summaries", "generate_final_summary"]:
    num_tokens = length_function(state["collapsed_summaries"])
    if num_tokens > token_max:
        return "collapse_summaries"
    else:
        return "generate_final_summary"


# Here we will generate the final summary
async def generate_final_summary(state: OverallState):
    response = await _reduce(state["collapsed_summaries"])
    return {"final_summary": response}


# Construct the graph
# Nodes:
graph = StateGraph(OverallState)
graph.add_node("generate_summary", generate_summary)  # same as before
graph.add_node("collect_summaries", collect_summaries)
graph.add_node("collapse_summaries", collapse_summaries)
graph.add_node("generate_final_summary", generate_final_summary)

# Edges:
graph.add_conditional_edges(START, map_summaries, ["generate_summary"])
graph.add_edge("generate_summary", "collect_summaries")
graph.add_conditional_edges("collect_summaries", should_collapse)
graph.add_conditional_edges("collapse_summaries", should_collapse)
graph.add_edge("generate_final_summary", END)

app = graph.compile()
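The `Annotated[list, operator.add]` field in the state deserves a closer look. The sketch below illustrates the general idea of a reducer attached to a state key: updates to that key are combined with the existing value instead of overwriting it. The `apply_update` function is a hypothetical, simplified model of this behavior written with the standard library only; it is not LangGraph's actual implementation.

```python
import operator
from typing import Annotated, Any, Dict, List, TypedDict, get_type_hints


class State(TypedDict):
    contents: List[str]
    summaries: Annotated[list, operator.add]


def apply_update(state: Dict[str, Any], update: Dict[str, Any]) -> Dict[str, Any]:
    """Merge an update: use the annotated reducer if any, else overwrite."""
    hints = get_type_hints(State, include_extras=True)
    merged = dict(state)
    for key, value in update.items():
        # Annotated types expose their extra arguments as __metadata__.
        metadata = getattr(hints[key], "__metadata__", ())
        if metadata and key in merged:
            merged[key] = metadata[0](merged[key], value)
        else:
            merged[key] = value
    return merged


state: Dict[str, Any] = {"contents": ["doc a", "doc b"], "summaries": []}

# Two independent "map" nodes each return a one-element list.
for update in ({"summaries": ["summary a"]}, {"summaries": ["summary b"]}):
    state = apply_update(state, update)

print(state["summaries"])
```

This is why each `generate_summary` call can return a single-element list: the lists from all parallel branches are concatenated into one `summaries` list.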

LangGraph allows the graph structure to be plotted to help visualize its function:

from IPython.display import Image

Image(app.get_graph().draw_mermaid_png())

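When running the application, we can stream the graph to observe its sequence of steps. Below, we will simply print out the name of each step.

Note that because we have a loop in the graph, it can be helpful to specify a recursion_limit on its execution. This will raise a specific error when the specified limit is exceeded.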

async for step in app.astream(
    {"contents": [doc.page_content for doc in split_docs]},
    {"recursion_limit": 10},
):
    print(list(step.keys()))

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['generate_summary']

['collect_summaries']

['collapse_summaries']

['collapse_summaries']

['generate_final_summary']

print(step)

{'generate_final_summary': {'final_summary': 'The consolidated summary of the main themes from the provided documents is as follows:\n\n1. **Integration of Large Language Models (LLMs) in Autonomous Agents**: The documents explore the evolving role of LLMs in autonomous systems, emphasizing their enhanced reasoning and acting capabilities through methodologies that incorporate structured planning, memory systems, and tool use.\n\n2. **Core Components of Autonomous Agents**:\n - **Planning**: Techniques like task decomposition (e.g., Chain of Thought) and external classical planners are utilized to facilitate long-term planning by breaking down complex tasks.\n - **Memory**: The memory system is divided into short-term (in-context learning) and long-term memory, with parallels drawn between human memory and machine learning to improve agent performance.\n - **Tool Use**: Agents utilize external APIs and algorithms to enhance problem-solving abilities, exemplified by frameworks like HuggingGPT that manage task workflows.\n\n3. **Neuro-Symbolic Architectures**: The integration of MRKL (Modular Reasoning, Knowledge, and Language) systems combines neural and symbolic expert modules with LLMs, addressing challenges in tasks such as verbal math problem-solving.\n\n4. **Specialized Applications**: Case studies, such as ChemCrow and projects in anticancer drug discovery, demonstrate the advantages of LLMs augmented with expert tools in specialized domains.\n\n5. **Challenges and Limitations**: The documents highlight challenges such as hallucination in model outputs and the finite context length of LLMs, which affects their ability to incorporate historical information and perform self-reflection. Techniques like Chain of Hindsight and Algorithm Distillation are discussed to enhance model performance through iterative learning.\n\n6. **Structured Software Development**: A systematic approach to creating Python software projects is emphasized, focusing on defining core components, managing dependencies, and adhering to best practices for documentation.\n\nOverall, the integration of structured planning, memory systems, and advanced tool use aims to enhance the capabilities of LLM-powered autonomous agents while addressing the challenges and limitations these technologies face in real-world applications.'}}
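The step trace printed earlier shows the collapse node running more than once before the final summary is produced. The sketch below imitates that loop and the effect of a step limit in plain Python. The names `route`, `run`, and `StepLimitError` are hypothetical, word counts stand in for tokens, and halving each summary stands in for an LLM collapse; LangGraph's own recursion limit counts steps differently, so treat this only as an illustration of why a bound on a looping graph is useful.

```python
from typing import List


class StepLimitError(RuntimeError):
    """Hypothetical error raised when the loop exceeds its step budget."""


def route(summaries: List[str], token_max: int) -> str:
    """Pick the next node name based on the total word count."""
    total = sum(len(s.split()) for s in summaries)
    if total > token_max:
        return "collapse_summaries"
    return "generate_final_summary"


def run(summaries: List[str], token_max: int, step_limit: int) -> List[str]:
    """Loop through collapse steps, recording node names, until routed out."""
    trace: List[str] = []
    while route(summaries, token_max) == "collapse_summaries":
        if len(trace) >= step_limit:
            raise StepLimitError(f"exceeded {step_limit} steps")
        # Stand-in for an LLM collapse: keep half of each summary's words.
        summaries = [
            " ".join(s.split()[: max(1, len(s.split()) // 2)]) for s in summaries
        ]
        trace.append("collapse_summaries")
    trace.append("generate_final_summary")
    return trace


trace = run(["a b c d e f g h"] * 2, token_max=5, step_limit=10)
print(trace)
```

If the collapse step ever fails to shrink its input enough, the limit turns what would be an endless loop into an explicit error.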

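In the corresponding LangSmith trace, we can see the individual LLM calls, grouped under their respective nodes.

Go deeper

Customization

- As shown above, you can customize the LLMs and prompts for the map and reduce stages.

Real-world use-case

- A related blog post presents a case study on analyzing user interactions (questions about LangChain documentation).

- The blog post and associated repo also introduce clustering as a means of summarization.

- This opens up another path beyond the stuff or map-reduce approaches that is worth considering.

Next steps

We encourage you to check out the how-to guides for more detail on these and other concepts.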