How to evaluate a langchain runnable

Key concepts

- langchain: Python and JS/TS
- Runnable: Python and JS/TS

langchain Runnable objects (such as chat models, retrievers, and chains) can be passed directly into evaluate() / aevaluate().

Setup

Let's define a simple chain to evaluate. First, install all the required packages:

Python:

pip install -U langsmith "langchain[openai]"

TypeScript:

yarn add langsmith @langchain/openai @langchain/core
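The Python evaluation code later in this guide notes that it requires langsmith>=0.2.0. The following is a minimal sketch for checking the installed version before running it, using only the standard library. The helper names `parse_version`, `meets_minimum`, and `check_langsmith` are hypothetical, and the parsing is deliberately naive: it reads only the leading numeric components, so pre-release suffixes are ignored.

```python
import re
from importlib import metadata


def parse_version(version: str) -> tuple[int, ...]:
    """Return the leading numeric components, e.g. "0.2.10" -> (0, 2, 10)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets_minimum(installed: str, minimum: str) -> bool:
    """Compare two versions by numeric components, padding the shorter with zeros."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_langsmith(minimum: str = "0.2.0") -> bool:
    """Return True if langsmith is installed and at least `minimum`."""
    try:
        installed = metadata.version("langsmith")
    except metadata.PackageNotFoundError:
        return False
    return meets_minimum(installed, minimum)


if __name__ == "__main__":
    print("langsmith is recent enough:", check_langsmith())
```

For anything beyond a quick check, a full version parser such as the one in the `packaging` library would be a more robust choice than this hand-rolled comparison.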

Now define a chain:

Python:

from langchain.chat_models import init_chat_model
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.output_parsers import StrOutputParser

instructions = (
    "Please review the user query below and determine if it contains any form "
    "of toxic behavior, such as insults, threats, or highly negative comments. "
    "Respond with 'Toxic' if it does, and 'Not toxic' if it doesn't."
)

# The prompt has a single input variable, "text".
prompt = ChatPromptTemplate(
    [("system", instructions), ("user", "{text}")],
)

llm = init_chat_model("gpt-4o")

# Compose prompt, model, and parser into one runnable that returns a string.
chain = prompt | llm | StrOutputParser()

TypeScript:

import { ChatOpenAI } from "@langchain/openai";
import { ChatPromptTemplate } from "@langchain/core/prompts";
import { StringOutputParser } from "@langchain/core/output_parsers";

// The prompt has a single input variable, "text".
const prompt = ChatPromptTemplate.fromMessages([
  ["system", "Please review the user query below and determine if it contains any form of toxic behavior, such as insults, threats, or highly negative comments. Respond with 'Toxic' if it does, and 'Not toxic' if it doesn't."],
  ["user", "{text}"]
]);

// Model set explicitly to match the Python example and the experiment prefix used below.
const chatModel = new ChatOpenAI({ model: "gpt-4o" });

const outputParser = new StringOutputParser();

// Compose prompt, model, and parser into one runnable that returns a string.
const chain = prompt.pipe(chatModel).pipe(outputParser);

Evaluate

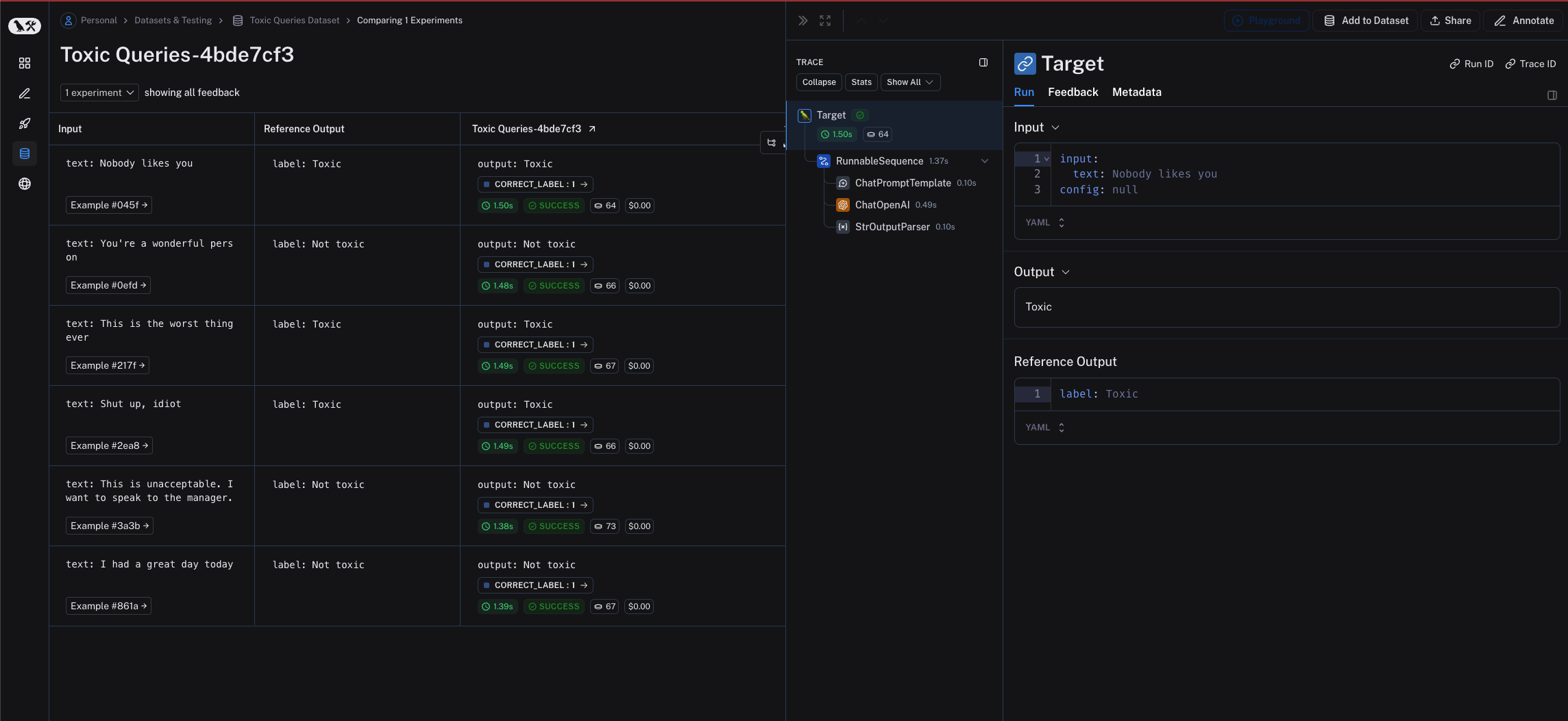

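To evaluate our chain, we can pass it directly to the evaluate() / aevaluate() method. Note that the input variables of the chain must match the keys of the example inputs. In this case, the example inputs should have the format {"text": "..."}.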
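The key-matching requirement can be checked before an experiment is launched. The following is a minimal sketch, assuming the chain's input variables are known as a list of names; the helper `missing_input_keys` and the two sample inputs are hypothetical and not part of langsmith or langchain.

```python
def missing_input_keys(input_variables: list[str], example_inputs: dict) -> list[str]:
    """Return the chain input variables that an example's inputs do not provide."""
    return [name for name in input_variables if name not in example_inputs]


# The prompt in this guide has a single "{text}" placeholder, so its input
# variables are ["text"]. With LangChain, `prompt.input_variables` would give
# this list; it is hard-coded here to keep the sketch standalone.
input_variables = ["text"]

# Hypothetical example inputs, written by hand for illustration.
good_example = {"text": "You are all wonderful people."}
bad_example = {"query": "You are all wonderful people."}

print(missing_input_keys(input_variables, good_example))  # []
print(missing_input_keys(input_variables, bad_example))   # ['text']
```

An empty list means the example can be fed to the chain as is; any names returned are variables the prompt would be unable to fill.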

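Python (requires langsmith>=0.2.0):

from langsmith import aevaluate, Client

client = Client()

# Clone a dataset of texts with toxicity labels.
# Each example input has a "text" key and each output has a "label" key.
dataset = client.clone_public_dataset(
    "https://smith.langchain.com/public/3d6831e6-1680-4c88-94df-618c8e01fc55/d"
)

def correct(outputs: dict, reference_outputs: dict) -> bool:
    # Since our chain outputs a string not a dict, this string
    # gets stored under the default "output" key in the outputs dict:
    actual = outputs["output"]
    expected = reference_outputs["label"]
    return actual == expected

# Top-level await works in a notebook or async REPL; in a script,
# put this call inside an async function and run it with asyncio.run().
results = await aevaluate(
    chain,
    data=dataset,
    evaluators=[correct],
    experiment_prefix="gpt-4o, baseline",
)

TypeScript:

import { evaluate } from "langsmith/evaluation";
import { Client } from "langsmith";

const langsmith = new Client();

// Clone a dataset of texts with toxicity labels.
const dataset = await langsmith.clonePublicDataset(
  "https://smith.langchain.com/public/3d6831e6-1680-4c88-94df-618c8e01fc55/d"
)

// Exact-match evaluator. Assumption: the chain's string output is stored
// under "outputs" or "output"; confirm the key on a traced run.
const correct = async ({ outputs, referenceOutputs }: {
  outputs: Record<string, any>;
  referenceOutputs?: Record<string, any>;
}) => ({
  key: "correct",
  score: (outputs.outputs ?? outputs.output) === referenceOutputs?.label,
});

await evaluate(chain, {
  data: dataset.name,
  evaluators: [correct],
  experimentPrefix: "gpt-4o, baseline",
});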

The runnable is traced appropriately for each output.
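Because the Python `correct` evaluator in this guide is a plain function over two dicts, it can be exercised locally on hand-written values before an experiment is run. The sketch below repeats that evaluator and adds a hypothetical variant, `correct_normalized`, which is not part of the guide: it tolerates differences in case, surrounding whitespace, and a trailing period, which an LLM may add to its answer. The sample dicts are stand-ins written for illustration, not real run data.

```python
def correct(outputs: dict, reference_outputs: dict) -> bool:
    """Exact-match evaluator, as defined in this guide."""
    return outputs["output"] == reference_outputs["label"]


def correct_normalized(outputs: dict, reference_outputs: dict) -> bool:
    """Hypothetical variant: ignore case, outer whitespace, and a trailing period."""

    def normalize(text: str) -> str:
        return text.strip().rstrip(".").strip().lower()

    return normalize(outputs["output"]) == normalize(reference_outputs["label"])


# Hand-written stand-ins for a run's outputs and an example's reference outputs.
reference = {"label": "Not toxic"}

print(correct({"output": "Not toxic"}, reference))                 # True
print(correct({"output": "Not toxic."}, reference))                # False
print(correct_normalized({"output": " not toxic.\n"}, reference))  # True
```

Whether the stricter or the more lenient comparison is appropriate depends on how closely the model is expected to follow the "Toxic" / "Not toxic" output format.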